A technical and in-depth analysis of the trade-offs that Ethereum’s consensus mechanism makes in its switch to proof-of-stake and how proof-of-work differs.

This is an opinion editorial by Scott Sullivan.

Normally Bitcoiners don’t care too much about what goes on in Shitcoin-land, but now that Ethereum has merged to proof-of-stake (PoS), there’s been quite the buzz on Bitcoin Twitter. Of course, the Bitcoin network itself will remain unaffected, but I think this “upgrade” is still worth paying some attention to. Now that Ethereum has cleansed itself of the “dirty” and “wasteful” externalities associated with proof-of-work (PoW), we can expect the gloves to come off in the narrative war, and I think Bitcoiners should be ready to punch back.

Learning how PoS works is a really good way to internalize the differences and trade-offs between PoW and PoS. Even though I had seen all the high-level arguments against PoS before — that PoS is more permissioned, centralizing, and oligarchical — I’ll admit that without looking into the details, it all felt kind of hand-wavy. By actually diving into the PoS algorithm, we can begin to see how all these properties naturally emerge from first principles. So if you’re curious about how the PoS algorithm works, and why it leads to these kinds of properties, then read on!

Solving The Double-Spend Problem

Let’s start with a quick recap of the problem we’re trying to solve. Suppose we have a large group of participants in a cryptocurrency network trying to maintain a decentralized ledger. Here’s the problem: How can new transactions be added to everyone’s ledger, such that everyone agrees on which new transactions are “correct”? PoW solves this problem quite elegantly: Transactions are grouped together in blocks, and each block takes a large amount of computational work to produce. The amount of work required moves up or down to ensure blocks are produced every ten minutes on average, giving each new block plenty of time to propagate throughout the network before the next one is created. Any ambiguity is resolved by selecting the chain with the most work, and double-spending is prevented because a double-spend chain would need at least 51% of the global hashpower to catch up.

But suppose now we want to throw away Satoshi Nakamoto’s key insight that made all of this possible in the first place. After all, those pesky ASICs are loud and annoying, and they consume more energy than all of George Soros, Bill Gates and Hillary Clinton’s private jets combined. Is there some way we can unambiguously agree on which transactions are true just by talking it out?

Ethereum’s proof-of-stake proposes to solve this problem using two key ingredients. The first is to make special “checkpoint blocks” every now and then, whose purpose is to give assurance to everyone in the network about the “truth” of the system at various points in time. Creating a checkpoint requires a two-thirds majority vote by stake, so there is some assurance that the majority of validators agreed on what the truth actually was at that point in time. The second ingredient is to punish users for adding ambiguity to the network, a process known as “slashing.” For example, if a validator were to create a fork, or vote on an older sidechain (similar to a 51% attack), then their stake would get slashed. Validators can also be slashed for inactivity, but not as much.

This leads us to our first principle behind PoS, which is that PoS is based on a negative (penalty-based) incentive system.

This contrasts heavily with Bitcoin and proof-of-work, which is a positive (reward-based) incentive system. In Bitcoin, miners can attempt to break the rules — badly formatted blocks, invalid transactions, and so on — but these blocks will just get ignored by full nodes. The worst-case scenario is a bit of wasted energy. Miners are also free to build on older blocks, but without 51% of the hashpower, these chains will never catch up, again just wasting energy. Any miner who participates in these actions, whether intentionally or not, need not worry about losing their accumulated bitcoin or mining machines, but they won’t get new rewards. Rather than live in fear, bitcoin miners can err on the side of taking action and risk.

The world is a very different place for validators living in Ethereum-land. Instead of working hard and being rewarded for adding security to the network, validators do no actual work, but must be careful that their node never misbehaves, lest they watch their savings go up in flames. If any proposed changes were made to the network, a validator’s first instinct would be to comply with whatever everyone else was doing, or else risk getting slashed. To be a validator is like walking on eggshells every day.

By the way, living under a negative incentive system is one of the, ahem, “benefits” of proof-of-stake, according to the Ethereum network’s co-founder Vitalik Buterin’s FAQ:

So how would slashing actually work on a technical level? Wouldn’t we need to first create a list of all the validators, in order to have something to slash in the first place? The answer is yes. To become a validator in Ethereum, one must first move ETH into a special “staking” address. Not only is this list needed for slashing, but also for voting since a two-thirds majority vote is needed for checkpoint blocks.

There are some interesting implications to maintaining a list of all validators at all times. How hard is it to join? How hard is it to leave? Do validators get to vote on the status of other validators?

This brings us to our second principle behind PoS, which is that PoS is a permissioned system.

The first step in becoming a validator is to deposit some ETH into a special staking address. How much ETH? The minimum required is 32 ETH, or about $50,000 at the time of this writing. For context, a decent bitcoin mining rig typically runs in the single-digit thousands of dollars, and a home miner can start with a single S9 for a few hundred bucks. To be fair, ETH’s high entry fee has a technical justification, since a higher stake means fewer validators, which lowers bandwidth requirements.

So the deposit fee is high, but at least anyone who owns 32 ETH is free to join or leave at any time, right? Not quite. There are security risks if large coalitions of validators were to all enter or exit at the same time. For example, if a majority of the network all left at once, then they could double-spend a finalized block by replaying a fork in which they never left, without getting slashed on either chain. To mitigate this risk, the on- and off-ramps have a built-in throughput limit. Currently this limit is set to max(4,|V|/65536) validators per epoch (every 6.4 minutes), and is the same for both entering and leaving. This translates roughly to one full validator set every ten months.
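To sanity-check the “ten months” figure, here is a minimal sketch of the throughput limit. The constant and function names are my own, and I’m assuming the division rounds down; only the formula max(4,|V|/65536) and the 6.4-minute epoch come from the text above.

```python
# Constants taken from the formula quoted above; the names are illustrative.
MIN_CHURN_PER_EPOCH = 4
CHURN_QUOTIENT = 65536
SECONDS_PER_EPOCH = 32 * 12  # 32 slots of 12 seconds = 6.4 minutes


def churn_limit(validator_count: int) -> int:
    """Validators allowed to enter (or leave) per epoch: max(4, |V| / 65536)."""
    # Assumption: integer (floor) division.
    return max(MIN_CHURN_PER_EPOCH, validator_count // CHURN_QUOTIENT)


def days_to_cycle_full_set(validator_count: int) -> float:
    """Days needed for the entire validator set to pass through the ramp."""
    epochs = validator_count / churn_limit(validator_count)
    return epochs * SECONDS_PER_EPOCH / 86_400


if __name__ == "__main__":
    validators = 6 * 65536  # hypothetical set size of 393,216 validators
    print(churn_limit(validators))                       # 6 per epoch
    print(round(days_to_cycle_full_set(validators), 1))  # about 291 days
```

Whenever the set size is an exact multiple of 65,536, the full cycle takes 65,536 epochs, which works out to roughly 291 days, or a bit under ten months.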

By the way, even though it’s currently possible for validators to publish an “exit” transaction and stop validating, the code to actually withdraw funds hasn’t even been written yet. Sounds a bit like “Hotel California” …

There is one last point about the incentives behind approving new validators. Suppose you were a shareholder in a large and stable company paying regular dividends every quarter. Would it make sense to give new shares away for free? Of course not, since doing so would dilute the dividends of all existing shareholders. A similar incentive structure exists in PoS, since each new validator dilutes the revenue of all existing validators.

In theory, validators could simply censor every single transaction that adds a new validator; however, in practice, I think such a blunt approach would be unlikely. This would be very noticeable and would destroy Ethereum’s image of “decentralization” overnight, potentially crashing the price. I think a more subtle approach would be used instead. For example, the rules could slowly change over time making it harder to become a validator, with excuses being offered such as “security” or “efficiency.” Any policies that enrich existing validators at the expense of new validators would have financial tailwinds, whether spoken out loud or not. We can start to see why PoS would tend towards oligarchy.

Overview Of The Casper Algorithm

Now that we know the high-level strategy behind PoS, how does the algorithm actually work? The main ideas behind checkpoints and slashing were put forward in an algorithm called Casper, so we’ll start there. Casper itself doesn’t actually specify anything about how to produce blocks, but rather provides a framework for how to superimpose a checkpoint/slashing strategy on top of some already-existing blockchain tree.

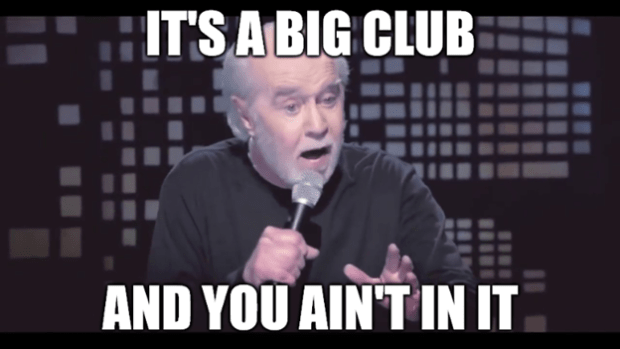

First, some arbitrary constant (C) is chosen to be the “checkpoint spacing” number, which determines how many blocks occur between checkpoints; for example, if C=100 then checkpoints would occur at blocks 0, 100, 200, and so on. Then the nodes all vote on which checkpoint block should be the next “justified” checkpoint. Rather than vote on single blocks in isolation, validators actually vote on (s,t) checkpoint pairs, which link some previously justified checkpoint source “s” to some new target checkpoint “t.” Once a checkpoint link (s,t) gets a two-thirds majority vote by stake, then “t” becomes a new justified checkpoint. The diagram below shows an example tree of checkpoints:

In this diagram, the h(b) function is referring to the “checkpoint height,” i.e., the block’s multiple of 100. You may have noticed that not every hundredth block is necessarily justified, which can happen if the vote failed at a certain height. For example, suppose at height 200 two separate checkpoints each received 50% of the vote. Since voting twice is a slashable offense, the system would get “stuck” unless some validators willingly slashed their own stake to achieve a two-thirds vote. The solution would be for everyone to “skip” checkpoint 200 and “try again” at block 300.

Just because a checkpoint is justified, does not mean it is finalized. In order for a checkpoint to count as finalized, it must be immediately followed by another justified checkpoint at the next possible height. For example, if checkpoints 0, 200, 400, 500 and 700 were all justified and linked together, only checkpoint 400 would count as “finalized,” since it is the only one immediately followed by another justified checkpoint.

Because the terminology is very precise, let’s recap our three categories. A “checkpoint” is any block which occurs at height C*n, so if C=100, every block with height 0, 100, 200, 300, and so on would all be checkpoints. Even if multiple blocks were created at height 200, they would all be “checkpoints.” A checkpoint is then “justified” if it’s either the root block at height 0, or if two-thirds of the validators voted to create a link between some previously justified checkpoint and the current checkpoint. A justified checkpoint is then “finalized” if it then links to another justified checkpoint at the next possible height. Not every checkpoint necessarily becomes justified and not every justified checkpoint necessarily becomes finalized, even in the final chain.
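The three categories can be sketched in a few lines of Python. This is only an illustration of the definitions above, not code from the Casper paper: the function name, the dictionary layout and the stake fractions are all made up, and checkpoints are identified by height alone, so it follows a single branch of the tree.

```python
from fractions import Fraction

SUPERMAJORITY = Fraction(2, 3)


def classify_checkpoints(link_votes, spacing=100):
    """Return (justified, finalized) checkpoint heights along one branch.

    link_votes maps (source_height, target_height) to the fraction of
    stake that voted for that link (a hypothetical input format).
    """
    justified = {0}  # the root block is justified by definition
    finalized = set()
    # Sorting by source height guarantees a source is classified before
    # any link that starts from it is examined.
    for (source, target), stake in sorted(link_votes.items()):
        if source in justified and target > source and stake >= SUPERMAJORITY:
            justified.add(target)
            # Finalized: justified AND linked to the very next checkpoint.
            if target == source + spacing:
                finalized.add(source)
    return justified, finalized


if __name__ == "__main__":
    votes = {
        (0, 200): Fraction(3, 4),
        (200, 300): Fraction(1, 2),  # failed vote, so 300 is skipped
        (200, 400): Fraction(2, 3),
        (400, 500): Fraction(4, 5),
        (500, 700): Fraction(7, 10),
    }
    justified, finalized = classify_checkpoints(votes)
    print(sorted(justified))  # [0, 200, 400, 500, 700]
    print(sorted(finalized))  # [400]
```

This reproduces the earlier example: checkpoints 0, 200, 400, 500 and 700 are justified, but only 400 is immediately followed by a justified checkpoint, so only 400 is finalized.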

Casper Slashing Rules

The slashing rules in Casper are designed such that it is impossible for two finalized checkpoints to exist in two separate forks, unless at least one-third of the validators broke the slashing rules.

In other words, only finalized checkpoints should ever be counted as unambiguous “truth” blocks. It’s even possible for two justified checkpoints to occur on both sides of a fork, just not two finalized checkpoints. There’s also no guarantee about when or where the next finalized checkpoint will occur, just that if a chain split were to occur, then you should sit back and wait until a finalized block shows up somewhere, and once it does then you know that’s the “correct” chain.

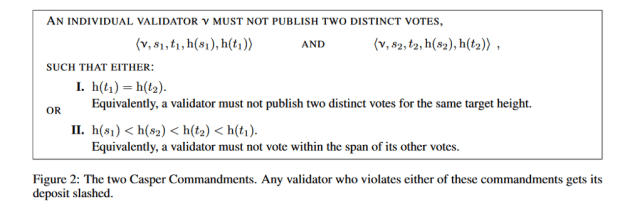

There are two slashing rules in Casper which enforce this property:

The first rule forbids anyone from double-voting on checkpoints with the same target height, so if a validator voted for two different checkpoint blocks with target height 200, that would be a slashable offense. The purpose of this rule is to prevent the chain from splitting into two different justified checkpoints with the same height, since this would require 2/3 + 2/3 = 4/3 of the total validator votes, implying that at least one-third of the validators broke the slashing rules. However, as we saw previously, it’s possible for justified checkpoints to “skip” certain block heights. What prevents a chain from splitting into different target heights? For example, couldn’t checkpoint 200 fork into justified checkpoints at 300 and 400 without anyone getting slashed?

That’s where the second rule comes in, which basically prevents validators from “sandwiching” votes inside other votes. For example, if a validator voted for both 300→500 and 200→700, that would be a slashable offense. In the case of a chain split, once one branch sees a finalized checkpoint, it becomes impossible for the other branch to see a justified checkpoint afterwards unless at least one-third of the validators broke rule #2.
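Both rules boil down to a comparison between two votes signed by the same validator. Here is a rough sketch; the `Vote` type and its field names are hypothetical, and a real vote would also carry block hashes and a signature.

```python
from typing import NamedTuple


class Vote(NamedTuple):
    source: int        # height of an already-justified checkpoint
    target: int        # height of the checkpoint being voted for
    target_block: str  # hypothetical identifier of the target block


def is_double_vote(a: Vote, b: Vote) -> bool:
    """Rule 1: two distinct votes with the same target height."""
    return a != b and a.target == b.target


def is_surround_vote(a: Vote, b: Vote) -> bool:
    """Rule 2: one vote's span is 'sandwiched' strictly inside the other's."""
    return (a.source < b.source and b.target < a.target) or (
        b.source < a.source and a.target < b.target
    )


def is_slashable(a: Vote, b: Vote) -> bool:
    return is_double_vote(a, b) or is_surround_vote(a, b)


if __name__ == "__main__":
    # 200 -> 700 sandwiches 300 -> 500, so this pair breaks rule 2.
    print(is_slashable(Vote(300, 500, "x"), Vote(200, 700, "y")))  # True
    # Two different blocks at target height 200 break rule 1.
    print(is_slashable(Vote(100, 200, "x"), Vote(100, 200, "y")))  # True
    # Consecutive, non-overlapping votes are fine.
    print(is_slashable(Vote(0, 100, "x"), Vote(100, 200, "y")))    # False
```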

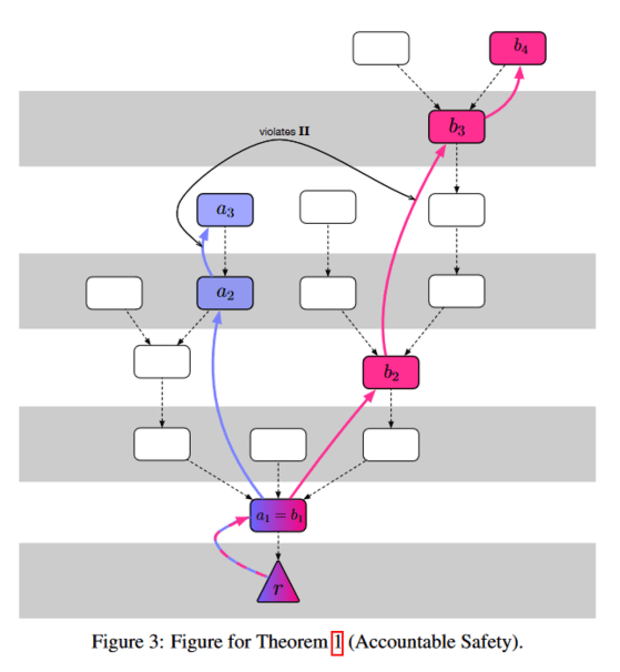

To see why, suppose the blockchain forked into justified checkpoints 500→800 and 500→900, then at some point the first chain saw a finalized checkpoint with link 1700→1800. Since both 1700 and 1800 can only be justified on fork #1 (assuming nobody broke the first slashing rule), the only way fork #2 could see a justified checkpoint after 1800 is if there was some voted-in link between heights H<1700 and H>1800. But since this vote would “sandwich” the 1700→1800 link and require a two-thirds vote, and the 1700→1800 already passed with a two-thirds vote, then at least one-third of the validators would need to break rule #2. The Casper paper has a nice diagram demonstrating this property:

And that’s it, just follow the Casper rules and you’re good!

Seems pretty simple, right? I’m sure PoS would only ever use slashing as an absolute last resort to maintain consensus, and not as an extortionary mechanism to pressure validators into behaving a certain way … right?

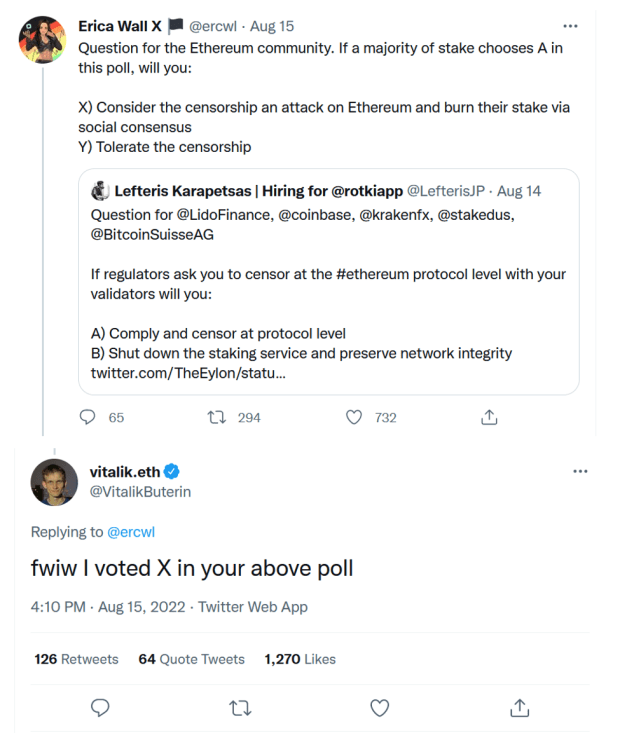

This brings us to our third principle behind PoS: There are no rules. The “rules” are whatever everyone else says they are.

One day your node could be technically following every Casper commandment to the letter, and the next day your savings could be slashed because you were doing something everyone else didn’t like. Approved a “team red” transaction that one time? Tomorrow the “team blue” majority might slash you. Or maybe you did the opposite and omitted too many “team red” transactions? Tomorrow the “team red” majority might slash you for censorship. The ability to slash goes far beyond the limited scope of OFAC (Office of Foreign Assets Control) censorship. PoS is like a nonstop Mexican standoff, where the implicit threat of slashing is ever-present at all times.

I wouldn’t be surprised if in a contentious hard fork, both sides hard-coded the validation rules of the other fork, just in case they wanted to punish anyone who joined the “wrong” side. Of course, this would be a nuclear option, and like nukes, each side might only choose to strike in retaliation. I would guess that most individual validators are neutral in that they would prioritize financial self-preservation over political self-sacrifice, but might outwardly take a side if they sensed that was the correct move to avoid getting slashed.

What Time Is It?

Now that we know the basics of checkpoints and slashing, we can move onto the actual algorithm used in Ethereum, called Gasper. This is a portmanteau of Casper, which we’ve already covered, and GHOST, a strategy for selecting the “best” chain of blocks in between checkpoints.

The first thing to understand about Gasper is that time itself is the main independent variable. Real-world time is divided into twelve-second units called “slots,” where each slot contains at most one block. These slots then form larger groups called “epochs,” where each epoch refers to one checkpoint. Each epoch contains 32 slots, making them 6.4 minutes long.
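Since everything is driven by the clock, finding the current slot and epoch is just integer arithmetic. A small sketch, where the function names and the genesis timestamp are placeholders of my own:

```python
SECONDS_PER_SLOT = 12
SLOTS_PER_EPOCH = 32


def slot_at(unix_time: int, genesis_time: int) -> int:
    """Slot number for a wall-clock time, counting from the genesis time."""
    return (unix_time - genesis_time) // SECONDS_PER_SLOT


def epoch_of(slot: int) -> int:
    """Epoch that a given slot belongs to."""
    return slot // SLOTS_PER_EPOCH


if __name__ == "__main__":
    genesis = 1_000_000  # placeholder timestamp, not the real genesis time
    print(SECONDS_PER_SLOT * SLOTS_PER_EPOCH / 60)  # 6.4 minutes per epoch
    print(epoch_of(slot_at(genesis + 383, genesis)))  # 0 (last second of epoch 0)
    print(epoch_of(slot_at(genesis + 384, genesis)))  # 1
```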

It’s worth noting that this paradigm flips the causal relation between time and block production when compared to PoW. In PoW, blocks are produced because a valid hash was found, not because enough time had passed. But in Gasper, blocks are produced because enough real-world time has passed to get to the next slot. I can only imagine the tricky timing bugs such a system may encounter, especially when it’s not just one program running on one computer, but tens of thousands of computers trying to run in sync all over the world. Hopefully, the Ethereum developers are familiar with the falsehoods programmers believe about time.

Now suppose you were starting up a validator node, and you were syncing the blockchain for the first time. Just because you observed that certain blocks referenced certain timestamps, how could you be sure that those blocks were really produced at those times? Since block production doesn’t require any work, couldn’t a malicious group of validators simulate an entirely fake blockchain from day one? And if you saw two competing blockchains, how would you know which is true?

This brings us to our fourth principle behind PoS, which is that PoS relies on subjective truth.

There is simply no objective way to pick between two competing blockchains, and any new nodes to the network must ultimately trust some existing source of truth to resolve any ambiguity. This contrasts significantly with Bitcoin, where the “true” chain is always the one with the most work. It doesn’t matter if a thousand nodes are telling you chain X, if a single node broadcasts chain Y and it contains more work, then Y is the correct blockchain. A block’s header can prove its own worth, completely removing the need for trust.
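To make the “most work” rule concrete, here is a simplified sketch of how a node could compare two candidate chains using nothing but the difficulty targets in their headers. The function names and the example targets are hypothetical, and I’m assuming each header’s hash has already been checked against its target.

```python
def block_work(target: int) -> int:
    """Expected number of hashes needed to find a block at this target."""
    return 2**256 // (target + 1)


def chain_work(targets) -> int:
    """Cumulative work of a chain, given the target of each block."""
    return sum(block_work(t) for t in targets)


def best_chain(chains):
    """The objectively 'true' chain is the one with the most total work."""
    return max(chains, key=chain_work)


if __name__ == "__main__":
    easy, hard = 2**240, 2**238  # a lower target means more work per block
    chain_x = [easy, easy, easy]  # longer, but less work
    chain_y = [hard, hard]        # shorter, but more work
    print(best_chain([chain_x, chain_y]) is chain_y)  # True
```

Notice that the comparison never asks who relayed the chain or how many peers vouched for it. The headers alone decide.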

By relying on subjective truth, PoS reintroduces the need for trust. Now I’ll admit, I’m perhaps slightly biased, so if you want to read the other side, Buterin wrote an essay containing his views here. I will admit that in practice, a chain split doesn’t seem all that likely given the Casper rules, but regardless, I do get some peace of mind knowing that this isn’t even a possibility in Bitcoin.

Block Production And Voting

Now that we’re familiar with slots and epochs, how are individual blocks produced and voted on? At the beginning of each epoch, the full validator set is “randomly” partitioned into 32 groups, one for each slot. During each slot, one validator is “randomly” chosen to be the block producer, while the others are chosen to be the voters (or “attestors”). I’m putting “randomly” in quotes because the process must be deterministic, since everyone must unambiguously agree on the same validator sets. However this process must also be non-exploitable, since being the block producer is a highly privileged position due to the extra rewards available from miner extractable value (MEV), or as it’s being renamed, “maximum extractable value.” “Ethereum Is A Dark Forest” is a great read on this.
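To show what “deterministic but random-looking” means, here is a toy version of the partitioning step. It is emphatically not Ethereum’s actual shuffling algorithm or seed source: it just sorts validators by a hash of a shared seed, so every node that knows the seed computes the same groups. All the names here are my own.

```python
import hashlib

SLOTS_PER_EPOCH = 32


def assign_committees(validator_ids, seed: bytes):
    """Split validators into 32 groups, one (proposer, attestors) pair per slot.

    Assumes at least 32 validators, so that every slot gets a proposer.
    """
    def sort_key(validator_id: int) -> bytes:
        return hashlib.sha256(seed + validator_id.to_bytes(8, "big")).digest()

    # Same seed, same ordering on every node: no coordination needed.
    shuffled = sorted(validator_ids, key=sort_key)
    groups = [shuffled[i::SLOTS_PER_EPOCH] for i in range(SLOTS_PER_EPOCH)]
    return [(group[0], group[1:]) for group in groups]


if __name__ == "__main__":
    slots = assign_committees(range(64), seed=b"epoch-42")
    proposer, attestors = slots[0]
    print(len(slots), proposer, attestors)
```

The hard part, which this sketch ignores entirely, is choosing a seed that nobody can bias in their own favor.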

Once a block is produced, how do the other validators vote or “attest” to it? Block proposal is supposed to happen within the first half (six seconds) of a slot, and attesting within the second half, so in theory there should be enough time for the attestors to vote on their slot’s block. But what happens if the block proposer is offline or fails to communicate or builds on a bad block? The job of an attestor is not necessarily to vote on that slot’s block, but rather whichever block “looks the best” from their view at that point in time. Under normal conditions this will usually be the block from that slot, but could also be an older block if something went wrong. But what does “look the best” mean, technically? This is where the GHOST algorithm comes in.

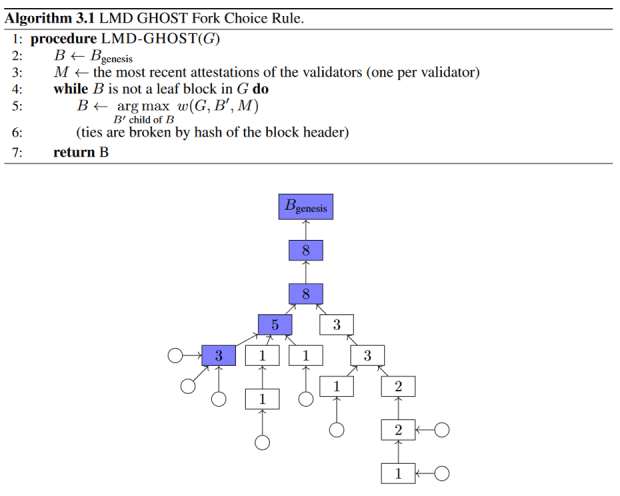

GHOST stands for “Greediest Heaviest Observed SubTree” and is a greedy recursive algorithm for finding the block with the most “recent activity.” Basically, this algorithm looks at all the recent blocks in the form of a tree, and walks down the tree by greedily selecting the branch with the most cumulative attestations on that entire subbranch. Only the most recent attestation of each validator counts towards this sum, and eventually this process lands on some leaf block.
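Here is a minimal sketch of that greedy walk. The data layout (a `children` dictionary plus each validator’s latest vote and stake) and the tie-breaking rule are my own assumptions for illustration.

```python
def ghost_head(children, latest_votes, root):
    """Walk from the root, always taking the child whose subtree has the
    most attesting stake, until reaching a leaf.

    children:     dict mapping block -> list of child blocks
    latest_votes: dict mapping validator -> (block, stake), latest vote only
    """
    parent = {c: p for p, kids in children.items() for c in kids}

    # A vote for a block also counts toward every ancestor of that block.
    weight = {}
    for block, stake in latest_votes.values():
        while block is not None:
            weight[block] = weight.get(block, 0) + stake
            block = parent.get(block)

    head = root
    while children.get(head):
        # Assumption: ties are broken by comparing block identifiers.
        head = max(children[head], key=lambda b: (weight.get(b, 0), b))
    return head


if __name__ == "__main__":
    tree = {"A": ["B", "C"], "B": ["D"], "C": ["E", "F"]}
    votes = {f"v{i}": ("D", 32) for i in range(4)}           # 4 votes for D
    votes.update({f"v{i}": ("E", 32) for i in range(4, 6)})  # 2 votes for E
    votes.update({f"v{i}": ("F", 32) for i in range(6, 9)})  # 3 votes for F
    print(ghost_head(tree, votes, "A"))  # F
```

In this example block D has the most direct votes, but the subtree under C carries more total stake (160 versus 128), so the walk goes through C and lands on F.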

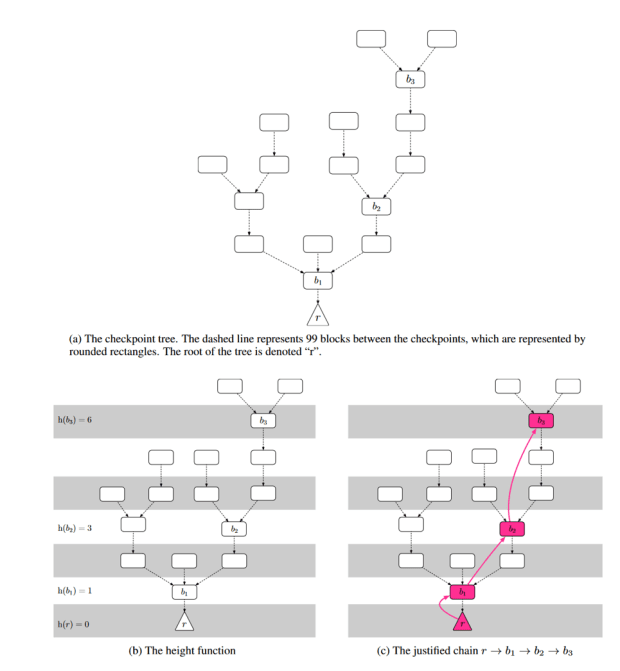

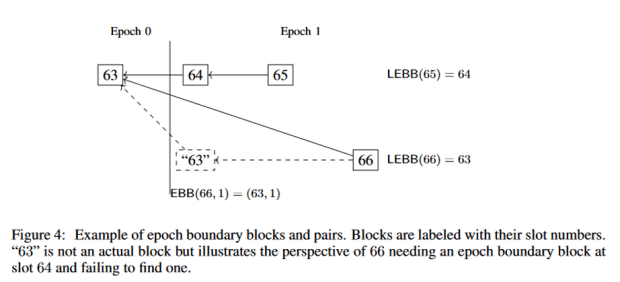

Attestations are not just votes for the current best block, but also for the most recent checkpoint which led to that block. It’s worth noting that in Gasper, checkpoints are based on epochs rather than block heights. Each epoch refers to exactly one checkpoint block, which is either the block in that epoch’s first slot, or if that slot was skipped, then the most recent block before that slot. The same block can theoretically be a checkpoint in two different epochs if an epoch somehow skipped every single slot, so checkpoints are represented using (epoch, block) pairs. In the diagram below, EBB stands for “epoch boundary block” and represents the checkpoint for a specific epoch, while “LEBB” stands for “last epoch boundary block” and represents the most recent checkpoint overall.

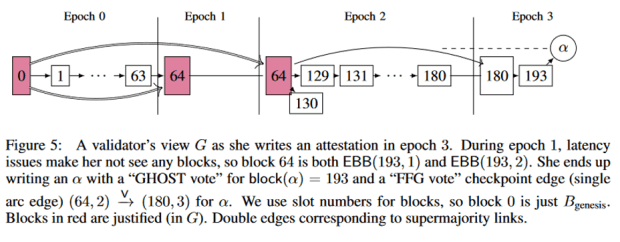
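The epoch boundary rule described above is easy to express in code. A small sketch, assuming blocks are stored in a dictionary keyed by slot number and that the genesis block sits at slot 0 (the names are hypothetical):

```python
SLOTS_PER_EPOCH = 32


def epoch_boundary_block(blocks_by_slot, epoch: int):
    """Return the (epoch, block) checkpoint pair for an epoch.

    Uses the block in the epoch's first slot or, if that slot was
    skipped, the most recent block before it.
    """
    first_slot = epoch * SLOTS_PER_EPOCH
    latest = max(slot for slot in blocks_by_slot if slot <= first_slot)
    return (epoch, blocks_by_slot[latest])


if __name__ == "__main__":
    chain = {0: "genesis", 31: "a", 32: "b", 63: "c", 65: "d"}
    print(epoch_boundary_block(chain, 1))  # (1, 'b'): slot 32 has a block
    print(epoch_boundary_block(chain, 2))  # (2, 'c'): slot 64 was skipped
    print(epoch_boundary_block(chain, 3))  # (3, 'd')
    print(epoch_boundary_block(chain, 4))  # (4, 'd'): same block, new epoch
```

The last two lines show why the epoch has to be part of the pair: block “d” serves as the checkpoint for both epoch 3 and epoch 4 because every slot in between was skipped.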

Similar to Casper, a checkpoint becomes justified once the total number of attestations passes the two-thirds threshold, and finalized if it was immediately followed by another justified checkpoint in the next epoch. An example of how this voting works is shown below:

There are two slashing conditions in Gasper, which are analogous to the slashing rules in Casper:

- No voting twice in the same epoch.

- No vote can contain epoch checkpoints which “sandwich” another vote’s epoch checkpoints.

Despite being based on epochs instead of block heights, the Casper rules still ensure that no two finalized checkpoints can occur on different chains unless one-third of the validators could be slashed.

It’s also worth noting that attestations are included in the blocks themselves. Similar to how a block in PoW justifies itself using its hash, a finalized checkpoint in PoS justifies itself using all of its past attestations. When someone does break the slashing rules, those bad attestations are included in a block which proves the violation. There’s also a small reward for the block producer who included the violation, in order to provide an incentive to punish rulebreakers.

Forks

It is interesting to think about what would happen in the case of a fork. To quickly recap, a fork refers to a change in the consensus rules, and they come in two varieties: hard forks and soft forks. In a hard fork, the new rules are not backwards-compatible, potentially resulting in two competing blockchains if not everyone switches over. In a soft fork, the new rules are more restrictive than the old rules, while keeping them backwards-compatible. Once over 50% of the miners or validators start enforcing the new rules, the consensus mechanism switches over without splitting the chain. Soft forks are generally associated with upgrades and new transaction types, but they also technically include any type of censorship enforced by a 51% majority. PoS also has a third type of “fork” not present in PoW: a chain split without any changes to the rules. But since we’ve already covered this, we’ll focus on hard and soft forks.

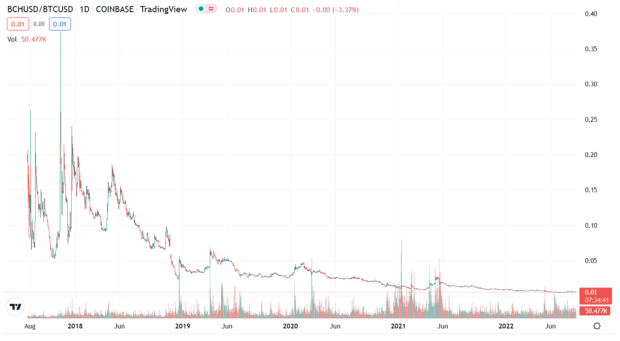

Let’s start with the simplest case: a standalone contentious hard fork. By contentious, I mean a rule change that divides the users politically. A bug fix or minor technical change likely wouldn’t be contentious, but something like changing the validation reward probably would be. If a hard fork was contentious enough, it could result in a chain split and would get resolved economically by users selling one chain and buying the other. This would be similar to the Bitcoin Cash split in 2017, which seems to have a clear winner:

Now suppose the validators were sitting around one day and decided they weren’t getting paid enough, and decided they should raise their rewards from 5% per year to 10% per year. This would be a clear trade-off in favor of the validators at the expense of non-validators who would now be getting more diluted. In the event of a chain split, which chain would win?

This leads to our fifth principle of PoS, which is that money is power.

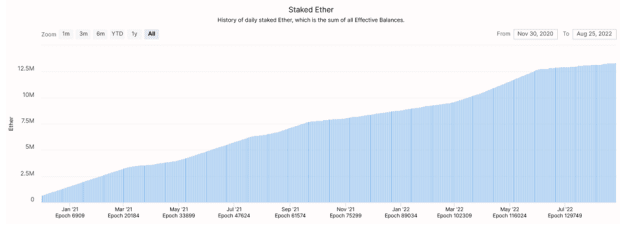

Out of the 120M ETH in existence, over 10% of that is currently being staked, as seen in the chart below:

Given a contentious hard fork between the validators and non-validators, assuming that all the non-validators market-sold the new chain and all the validators market-sold the old chain, then in theory the old chain would win, since the majority of ETH would still be held by non-validators (90% versus 10%). But there are a few more things to consider. First, after any chain split, the validators would still be “in control” of both blockchains. If the validators were able to influence the other chain, they might be incentivized to make it fail. Second, there’s also the nuclear option discussed earlier, whereby the new chain might slash anyone still validating the old chain to pressure them into joining. Finally, the validators would likely carry significant social and political influence over everyone else in the network. If Buterin, the Ethereum Foundation and the exchanges all decided in unison they were going to raise the staking reward, I find it difficult to believe that regular Ethereum users and validators could keep the old fork going while also making it more valuable through buying pressure.

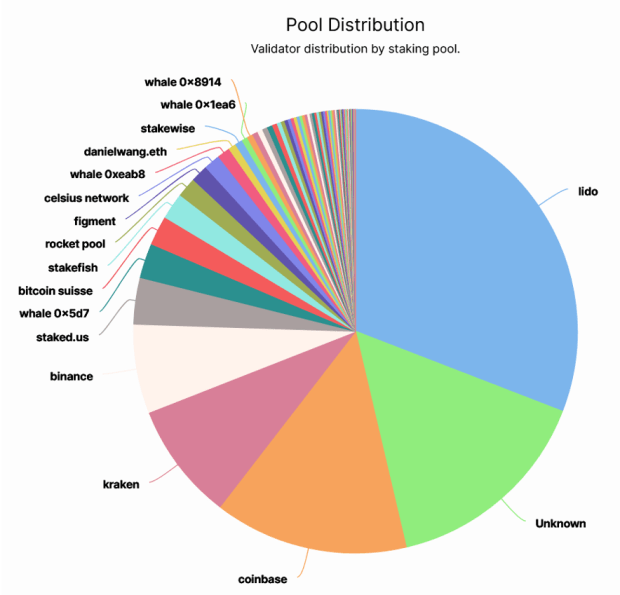

Moving on to soft forks, what would happen in a contentious soft fork, such as OFAC censorship? The validators are fairly centralized, as we can see in the chart below:

Unlike in PoW, where miners can switch pools at the press of a button, validators in Ethereum are locked into a staking address until they process an exit transaction. If Lido and the top exchanges were made to censor certain transactions, they could easily pass the two-thirds majority needed for deciding checkpoints. Earlier, we saw how Buterin and the other ETH validators could try to counter a censorship soft fork with their own counter-censorship hard fork, while slashing the censors in the process. Even if they succeeded in creating a fork, a lot of value would be destroyed in the process, both from the slashing and from a loss of trust.

Closing Thoughts

In this essay, we looked at how PoS solves the double-spend problem with Gasper, a combination of checkpoint/slashing rules called Casper, and a “best block” voting rule called GHOST. To recap, Gasper divides time into units called slots, where each slot can have at most one block, and the slots are grouped into epochs, where each epoch refers to one checkpoint. If a two-thirds majority votes on a checkpoint, it becomes justified, and if two justified checkpoints occur in a row, the first of those two checkpoints becomes finalized. Once a checkpoint becomes finalized, it becomes impossible for a parallel chain to be finalized, unless one-third of the validators could get slashed.

In this process we uncovered five principles of PoS:

- PoS uses a negative (penalty-based) incentive structure.

- PoS is a permissioned system.

- PoS has no rules.

- PoS relies on subjective truth.

- In PoS, money is power.

Each of these principles has opposite behavior in PoW:

- PoW uses a positive (reward-based) incentive system.

- PoW is a permissionless system (anyone can start or stop mining at any time).

- In PoW, forks which change the rules get ignored.

- PoW relies on objective truth.

- In PoW, miners serve the users and have little power themselves.

I believe everyone should strive to create the kind of world that they want to live in. If, like me, you want to live in a permissionless world where you can have control over your money, where hard work is rewarded and passive ownership is a liability, and where your money will store its value far into the future without changing on a whim, then you may want to think carefully about the trade-offs between PoW and PoS, and fight in favor of the principles you want to live by.

This is a guest post by Scott Sullivan. Opinions expressed are entirely their own and do not necessarily reflect those of BTC Inc. or Bitcoin Magazine.